1. Introduction

The year 2018 may see an expansion of everyday consumer services that use artificial intelligence (AI). Here, I would like to discuss not only the public acceptance of innovation but also the role of standards in promoting products and services based on AI technology.

2. Social acceptance of new technology

It is expected that various products and services using AI-related technology will be developed in the future. At the same time, the social acceptance of technology requires consumers to engage with it in greater numbers. What measures can be taken to promote the acceptance of technology by consumers? Rogers's theoretical model captures the pattern of diffusion of new ideas [1]. According to this model, diffusion can generally be represented by a convex curve. Nevertheless, in the case of products or services based on new emerging technologies, such as those related to AI, the rate of initial dissemination seems to be relatively low.

The common perception of products and services that use AI-related technology seems to be that computers process things faster, more accurately, and with less bias than humans. The technology can avoid the inevitable fatigue, misunderstanding, or intentional bias to which a human being is susceptible. If the technology is applied in appropriate fields and mistakes in usage are avoided, consumer satisfaction with these services is expected to increase. On the other hand, users seem to find it difficult to tell whether a product or service is based on AI-related technology, because the technology is associated with an information-processing method. This difficulty in recognizing the technology can be an impediment to its diffusion and acceptance.

3. Characteristics of goods and services

How can we explain the phenomenon that certain goods and services are difficult for consumers to comprehend, whereas others are easy to understand? Goods and services can be categorized into several types. I would like to explain the theory of search goods, experience goods, and credence goods. Search goods are products and services whose details and quality can be understood from information provided before purchase; a personal computer is a typical example. One can reasonably judge the utility that such a product will provide by reading the performance data in a catalog. On the other hand, the value of experience goods cannot be understood without actually using them. Education and medical services fall under this category: they can be understood to some extent in advance, but we cannot know our level of satisfaction with them without actually experiencing them.

The search goods and experience goods categories given here are not mutually exclusive in terms of concrete products and services. In many cases, search goods are considered to have the properties of experience goods. The approximate performance of a car can be understood from its promotional material, but the actual comfort is difficult to ascertain without taking a ride in the car.

With this in mind, how can we classify products and services that use AI-related technology? As mentioned earlier, AI-related technology is related to computational algorithms; viewed from the outside, it is difficult to tell whether AI is even in use or how it differs from other technologies. The third category of goods, credence goods, comprises products and services whose internal characteristics usually cannot be detected even after use and are therefore difficult for consumers to understand (e.g., genetically modified food). At present, it can be said that goods and services using AI-related technology generally have the characteristics of credence goods. It is difficult for consumers to tell whether or not a product contains this technology.

The spread of new technology to society may be delayed when information on goods and services is difficult to communicate to consumers. This example shows the difficulty faced when a new technology is released into society.

4. The role and challenges of standards in technology dissemination

One remedy for the difficulty described above is to provide information on the outside of the device, where it is visible to the consumer. In other words, affixing a label to the product can not only communicate whether AI-related technology is being used but also allow consumers to choose whether to use it. This process is generally called labeling. To make this possible, it is necessary to determine criteria for applying appropriate labels. Thus, it is necessary to determine standards for AI-related technology.

It is thought that the formulation of such standards plays a substantial role in spreading new technologies. An effective standard includes necessary definitions of terminology, measurement methods, and safety stipulations. Establishing common frameworks of knowledge for the public related to the new technology promotes understanding and proliferation of the technology. For example, in the case of nanotechnology, the formulation of standards that provided clarity on basic concepts took place swiftly after the new technology emerged, and it is thought that these standards played a major role in its spread [2].

At present, technical classifications of AI-related technology are not clearly defined within the international standards system, and the boundaries of this technology are considered to be ambiguous [3] [4] (1), (2), (3), (4). To promote social acceptance of the technology in the future, establishing standards is an important issue that needs to be resolved.

5. Summary

To promote the social dissemination of AI-related technology, the difficulty of distinguishing goods and services that actually use AI technology from those that do not seems to be an issue that needs to be resolved. Standards and indications (artificial intelligence labeling (4), (5)) can be expected to play an effective role as one measure toward solving this problem and promoting dissemination of the technology.

Note:

(1) As of December 2018

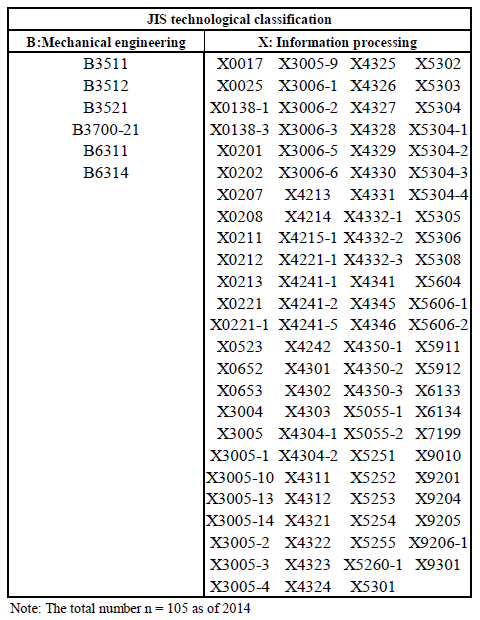

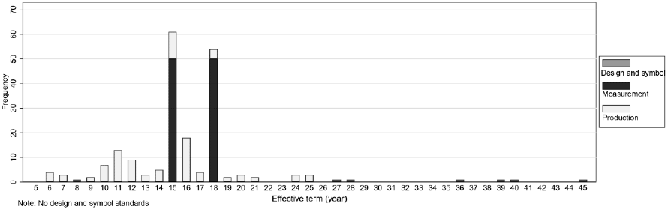

(2) The author is currently creating a technical classification for AI-related technology and reclassifying the Japanese Industrial Standards (JIS) based on the new classification. There were about 100 active and withdrawn AI-related standards as of 2014 (Table 1); Figure 1 presents the distribution of the effective terms of those standards [4].

(3) A list of AI-related standards (Table 1) can be downloaded from the homepage of RIETI (https://www.rieti.go.jp/en/publications/summary/18080001.html) for academic and educational purposes.

(4) The JIS, ISO, and IEC systems still do not contain technological classifications for AI (as of 2018).

(5) This is also called "marking." There is no substantial difference between labeling and marking; the two expressions refer to the same process. In this context, it is worth pointing out that an "AI mark" has the same meaning and effect as an AI label. Authentication is also performed to confirm whether the good in question qualifies to use the mark or label. This authentication, which can take the form of either self-authentication or third-party authentication, is often used as part of the standards and certification process.