Unprecedented opportunity

Terms such as big data, artificial intelligence (AI), and the Internet of Things (IoT) revolution now appear frequently in daily media reports and on social networking sites (SNS). The importance of these emerging technologies is widely recognized even among those with an aversion to numbers and statistics (and data science). In my previous article for the RIETI Column (Konishi 2014), I provided an overview of the boom in big data. At the time, I strongly hoped that another emerging boom in statistics (and data science) would be a lasting one. As it turns out, statistics (and data science) has found a role for itself in today's world as a tool for machine learning, a data analysis method attracting much attention amid the ongoing AI boom, and as a tool for evidence-based policymaking (EBPM). What started as vague interest in data science is now focused on its role as an indispensable tool for AI and EBPM.

Meanwhile, the AI boom that started in 2012 shows no signs of fading, as AI continues to evolve and find its way into our everyday lives. Why is the AI boom this time around—the third one—lasting so long?

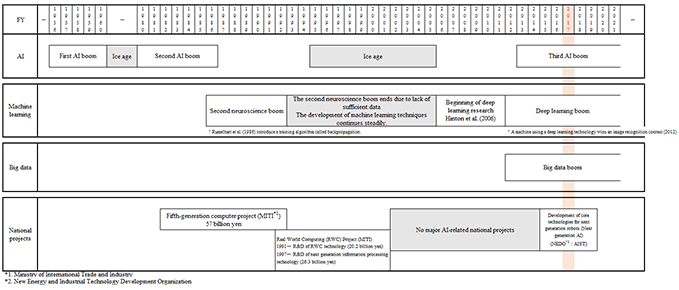

The timeline in the Figure, taken from Konishi and Motomura (2017), shows the development of AI, machine learning, big data, and relevant large-scale national projects over the years. In the first half of the second AI boom, machine learning—in which computers learn from data without being explicitly programmed—was not linked with AI technologies, and thus, the construction of information systems involved an enormous amount of human work, i.e., writing programs for computers to perform specific tasks and processes. The latter half of the second AI boom overlapped with the second boom in neuroscience, a period in which numerous theoretical and applied studies on neural networks were carried out. However, as the processing capacity of computers was rather limited and large-volume data—such as those referred to as big data—were not available, neuroscience at this stage failed to achieve a sufficiently high level of precision, ushering in the ice age of neuroscience in the first half of the 1990s.

As shown in the Figure, AI technologies and neural networks saw no major boom from the 1990s through the first half of the 2000s, nor did relevant large-scale national projects from the first half of the 2000s through 2015. A key turning point came in 2006, when a group of researchers introduced a new learning algorithm that allows a neural network to have multiple hidden layers connecting the input and output layers. This spurred research on deep learning. Then, in 2012, a machine using deep learning technology won an image recognition contest, attracting worldwide attention and creating a deep learning boom. In parallel, Japan's third AI boom began in 2013. Notably, these booms overlap with the big data boom that began in 2012. Furthermore, in May 2015, the Artificial Intelligence Research Center (AIRC), the largest AI research and development (R&D) hub in Japan, was established within the National Institute of Advanced Industrial Science and Technology (AIST). It is undertaking R&D on next-generation AI through March 2020, and industry-government-academia collaboration in AI research has once again been set in motion.

Today, we have everything needed for AI development: machine learning-based AI technologies, high performance computing, big data, relevant large-scale projects, and industrial application needs. Together they offer the greatest opportunity ever, which explains why the ongoing boom is so extensive and is having an impact on society as a whole.

AI literacy and service business operations

AI technologies introduced for use in service business operations are mostly purpose-specific—rather than multipurpose as seen in humanoid robots—and designed to perform and automate specific tasks previously carried out by humans just as well as, or even better than, humans. In my previous article (Konishi 2015), I defined AI literacy as being conscious about whether excessive labor, money, or time is spent carrying out tasks that machine learning and other AI technologies are highly capable of performing, i.e., categorization, repetition, exploration, organization, and optimization. AI is most efficient when used to perform the kind of tasks in which repeating, increasing the number of combinations, and/or spending more time will lead to greater accuracy and/or value.

For instance, suppose there is a project that involves finding and approaching potential customers in order to earn revenue. In classifying existing customers for this purpose, a human worker can come up with several distinctive features or properties per customer at most. AI, however, would make it possible to identify several dozen features or properties per customer by segmenting customers based on their purchasing behavior. Likewise, an AI system programmed to access information on economic trends or on certain rival companies would be able to produce a strategy that reflects such information. This is very elementary, but information must be on the network to be accessible to AI; conversely, everything on the network is accessible to AI as information.

Unlike those introduced for use in manufacturing operations, the AI and information and communications technologies (ICT) currently available for use in service operations are devices rather than machines. Those devices, whether wearables or personal computers, can be introduced piece by piece and still make a difference, thanks to the accumulation of massive data as well as to the smaller size, refinement, and prevalence of information technology equipment. Against this backdrop, an increasing number of companies, both big and small, are showing willingness to collect big data and utilize AI technologies. However, no matter how convenient these technologies are, it remains our task to determine which ones to employ for our business processes. It is not easy to gain insight into what it takes, and under what environment, to make a project successful and sustainable.
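To make the customer segmentation example above concrete, the following is a minimal sketch of grouping customers by purchasing behavior with plain k-means clustering. It is an illustration under stated assumptions, not the method used in any project discussed here: the function name `kmeans`, the two features (purchases per month and average basket value), and the customer figures are all hypothetical. A real system would use many more features and would normally rescale them first.

```python
from math import dist

def kmeans(points, k, iterations=20):
    """Plain k-means. Assumption: the first k points serve as initial centroids."""
    centroids = [tuple(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each customer to the nearest centroid.
        labels = [min(range(k), key=lambda c: dist(p, centroids[c]))
                  for p in points]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = tuple(sum(col) / len(members)
                                     for col in zip(*members))
    return labels, centroids

# Hypothetical customers: (purchases per month, average basket value)
customers = [(1, 20.0), (2, 25.0), (1, 22.0),
             (12, 210.0), (10, 190.0), (11, 205.0)]

labels, centroids = kmeans(customers, k=2)
for customer, label in zip(customers, labels):
    print(customer, "-> segment", label)
```

With this toy data the occasional low-spend customers and the frequent high-spend customers fall into separate segments. The same procedure extends to dozens of features per customer, which is where it goes beyond what a human worker can classify by hand.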

Perspectives needed to make AI sustainably useful

Konishi and Motomura (2017) reviewed 28 AI projects undertaken by AIST with regard to their respective purposes and outcomes. Many of them are practical and intended for application in real-world service operations, for instance, to improve work processes in medical and nursing care services, make service recommendations to clients in the entertainment industry, and optimize commodity logistics. Through this review, we found some answers to our simple question: What kinds of AI projects develop sustainably?

- The purpose of the project and the uses of technology are clear, and benchmark targets can be translated into data.

- Workers on the frontline are highly motivated and have strong needs for AI.

- It is possible to accumulate and integrate data at a relatively low cost via sensors, networks, and the Internet.

- It is possible to collect data continuously by integrating the process into the regular flow of work.

- Knowledge obtained by analyzing data and/or the results of calculation can be used as additional data.

We can see that data play crucial roles in AI projects. Meanwhile, researchers are working to develop various techniques and algorithms collectively referred to as AI technologies. Because these technologies are put into practical application as soon as they become available, they spread quickly. Given the programming techniques and computing environment as they stand today, it is quite easy to improve and utilize existing systems in accordance with the needs of workers. In other words, the technology itself is not the bottleneck: since data are the key determinant of the performance of machine learning-based AI, the sustainable availability of high quality data is crucial to competitiveness. The quality of data depends on frontline workers' ability to collect data, whether at a retail shop or inside a company office. Collecting as much information as possible on the behavior of as many people as possible will enhance the precision of analysis and the value of data.

The initial stage of decision making over whether or not to introduce an AI system is quite important. In selecting a specific AI system out of many available options, it is important to ask what outcome or added value we intend to generate with the task and why we want machines to take it over from human workers. Just look around your own room. You will see quite a few home appliances that were purchased to improve efficiency or for pure satisfaction but have been left unused for a long time. The same holds true for business and AI investments. We often seek to introduce AI as if doing so were a goal in itself. To avoid this pitfall, we must make sure we know the reason and the purposes for introducing AI.
